Learn what Retrieval-Augmented Generation (RAG) is, how it works, and why it’s revolutionizing AI applications. This comprehensive beginner’s guide explains RAG with real-world examples and practical implementations.

Table of Contents

- What is Retrieval-Augmented Generation (RAG)?

- The Problem RAG Solves

- How Does RAG Work?

- Real-World RAG Examples

- What RAG is Capable Of

- RAG Architecture Components

- Building a Simple RAG System

- RAG vs Fine-Tuning

- Common RAG Use Cases

- Challenges and Limitations

- Best Practices for RAG Implementation

- The Future of RAG

- Conclusion

- References

- YouTube Videos

What is Retrieval-Augmented Generation (RAG)?

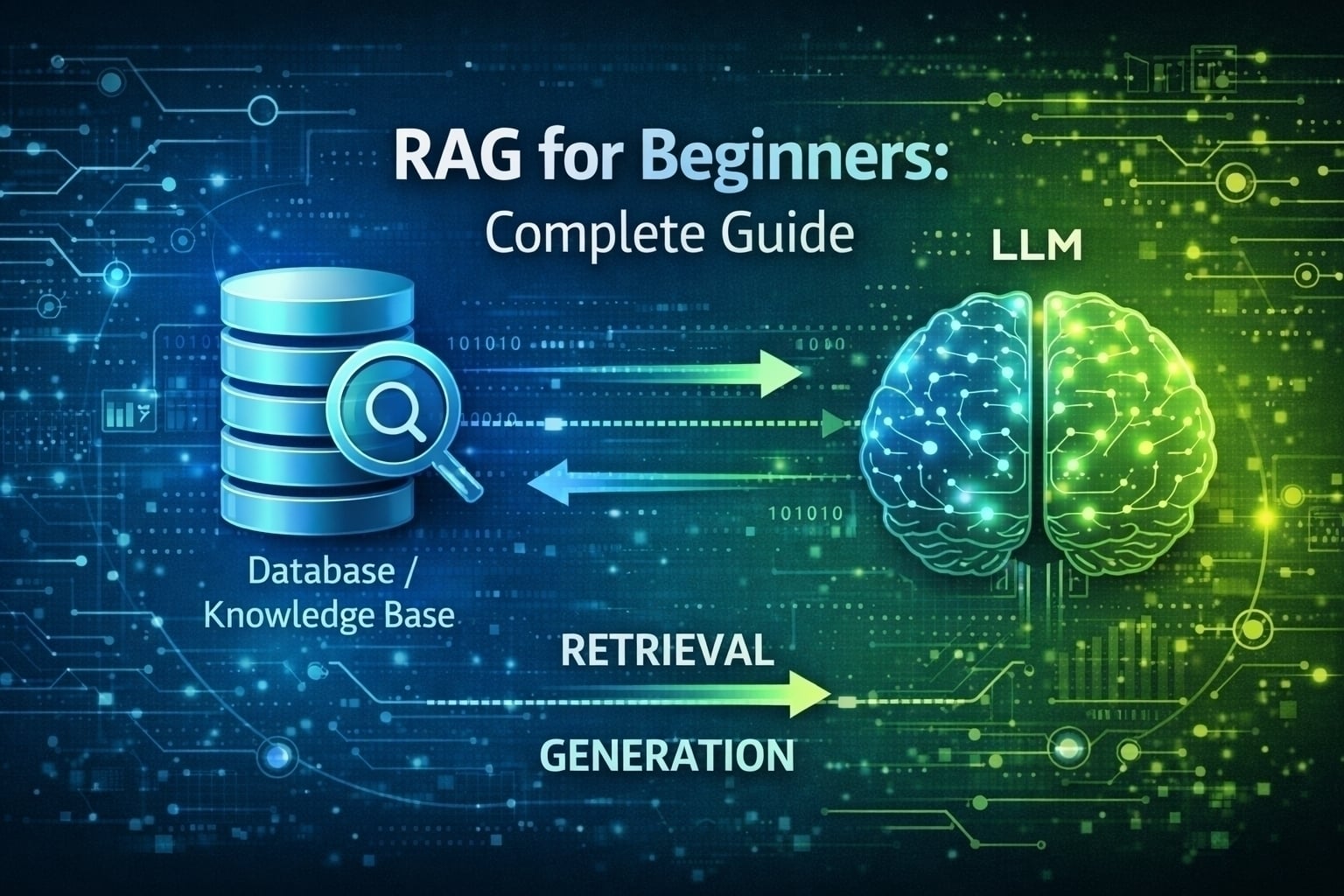

Retrieval-Augmented Generation, or RAG, is an AI technique that combines the power of large language models (LLMs) with external knowledge retrieval systems. Instead of relying solely on the information the AI was trained on, RAG allows the AI to “look up” relevant information from external sources before generating a response.

Think of RAG like a student taking an open-book exam versus a closed-book exam. In a closed-book exam (traditional LLM), the student must rely entirely on what they’ve memorized. In an open-book exam (RAG), the student can reference textbooks and notes to provide more accurate, up-to-date, and detailed answers.

The Problem RAG Solves

Large Language Models like GPT-4, Claude, or Llama have impressive capabilities, but they face several limitations:

1. Knowledge Cutoff

LLMs are trained on data up to a specific date. They don’t know about events, updates, or information that occurred after their training cutoff.

Real-World Example: If you ask ChatGPT (with a 2023 cutoff) about a software framework released in 2024, it won’t have that information. With RAG, the system can retrieve the latest documentation and provide accurate answers.

2. Hallucinations

LLMs sometimes generate plausible-sounding but incorrect information when they don’t know the answer.

Real-World Example: Ask an LLM about your company’s specific HR policies, and it might make up policies that sound reasonable but are completely wrong. RAG retrieves your actual policy documents before generating responses.

3. Domain-Specific Knowledge

General-purpose LLMs lack deep knowledge about your specific business, codebase, or proprietary information.

Real-World Example: A customer service chatbot for a telecommunications company needs to know about specific plans, pricing, and troubleshooting procedures that aren’t in the LLM’s training data.

How Does RAG Work?

RAG operates in three main steps:

Step 1: Retrieval

When a user asks a question, the system searches a knowledge base (documents, databases, websites) for relevant information.
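The `search_knowledge_base` function used in this step is not defined in this guide. As a rough sketch of what it could look like, the version below ranks documents in a small in-memory list by how many words they share with the question. The `KNOWLEDGE_BASE` contents, the `tokenize` helper, and the `top_k` parameter are assumptions made for illustration; production systems usually rank by embedding similarity, as described later in this guide.

```python
import re

# Hypothetical in-memory knowledge base; a real system would load
# documents from files, a database, or a search index.
KNOWLEDGE_BASE = [
    "Electronics can be returned within 30 days of purchase under our return policy.",
    "Original packaging is required for returns.",
    "Standard shipping takes 3 to 5 business days.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_knowledge_base(question: str, top_k: int = 2) -> list[str]:
    """Return up to top_k documents ranked by words shared with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), doc) for doc in KNOWLEDGE_BASE]
    # Drop documents with no shared words, then sort best matches first.
    matches = sorted(
        (pair for pair in scored if pair[0] > 0),
        key=lambda pair: pair[0],
        reverse=True,
    )
    return [doc for _, doc in matches[:top_k]]
```

Word overlap is crude: it counts filler words like "is" and "for" and misses synonyms, which is one reason real retrievers rely on embeddings.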

```python
# Simplified RAG retrieval example
user_question = "What is the return policy for electronics?"

# Search knowledge base
relevant_documents = search_knowledge_base(user_question)
# Returns: ["Electronics can be returned within 30 days...",
#           "Original packaging required for returns..."]
```

Step 2: Augmentation

The retrieved information is added to the user’s original question as context.

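```python
# Augment the prompt with retrieved context
# Join the documents so the prompt contains plain text, not a Python list repr
context = "\n".join(relevant_documents)

augmented_prompt = f"""
Context from knowledge base:
{context}

User Question: {user_question}

Please answer based on the context provided above.
"""
```

Step 3: Generation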

The LLM generates a response using both its training knowledge and the retrieved context.

```python
# Generate response with context
# (`llm` is a placeholder for whichever model client you use)
response = llm.generate(augmented_prompt)
# Returns: "Based on our return policy, electronics can be
#           returned within 30 days of purchase..."
```

Real-World RAG Examples

Example 1: Customer Support Chatbot

Scenario: An e-commerce company has thousands of products and policies that change constantly.

Without RAG: The chatbot might provide outdated information or hallucinate policies.

With RAG:

- Customer asks: “What’s the warranty on the XYZ Laptop?”

- RAG retrieves the latest product specifications from the database

- LLM generates: “The XYZ Laptop comes with a 2-year manufacturer warranty covering hardware defects…”

Example 2: Legal Document Analysis

Scenario: A law firm needs to answer questions based on thousands of case files and legal documents.

Without RAG: Lawyers spend hours manually searching through documents.

With RAG:

- Lawyer asks: “Are there precedents for contract disputes in the healthcare industry?”

- RAG searches the firm’s document database

- Returns relevant case summaries with citations

- LLM synthesizes the information into a coherent answer

Example 3: Code Documentation Assistant

Scenario: A software company wants to help developers understand their large codebase.

Without RAG: Developers read through thousands of files to understand how to use internal APIs.

With RAG:

- Developer asks: “How do I implement user authentication in our framework?”

- RAG retrieves relevant code snippets, documentation, and examples

- LLM provides step-by-step instructions with actual code from the codebase

```java
// RAG can retrieve and explain actual code patterns
@RestController
public class AuthController {

    @Autowired
    private AuthenticationService authService;

    @PostMapping("/api/login")
    public ResponseEntity<TokenResponse> login(@RequestBody LoginRequest request) {
        // RAG explains: "This is our standard authentication pattern..."
        return authService.authenticate(request);
    }
}
```

Example 4: Medical Information System

Scenario: Healthcare providers need quick access to patient histories and medical research.

With RAG:

- Doctor asks: “What are the latest treatment protocols for Type 2 Diabetes?”

- RAG retrieves recent medical journal articles and clinical guidelines

- LLM summarizes findings with proper citations

- Ensures information is current and evidence-based

What RAG is Capable Of

1. Up-to-Date Information

RAG systems read from a knowledge base that can be updated at any time, so responses reflect the latest information available when the query runs.

2. Source Attribution

Unlike pure LLMs, RAG can cite specific documents, making it easier to verify information.
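Citations are only possible if source metadata travels with each retrieved passage. The sketch below shows one way to do that; the `Chunk` class and `format_answer_with_sources` function are hypothetical names introduced for illustration, and the answer text is hard-coded where a real system would use the LLM's output.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """A retrieved passage plus the metadata needed to cite it."""
    text: str
    source: str
    page: int


def format_answer_with_sources(answer: str, chunks: list[Chunk]) -> str:
    """Append a de-duplicated list of sources to a generated answer."""
    citations = []
    for chunk in chunks:
        citation = f"{chunk.source} (page {chunk.page})"
        if citation not in citations:
            citations.append(citation)
    sources = "\n".join(f"Source: {citation}" for citation in citations)
    return f"Answer: {answer}\n{sources}"


retrieved = [
    Chunk("Employees may work remotely 3 days per week.", "HR Policy Document", 12),
    Chunk("Remote days must be agreed with your manager.", "HR Policy Document", 12),
]
print(format_answer_with_sources(
    "The remote work policy allows 3 days per week.", retrieved
))
```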

Answer: The company's remote work policy allows 3 days per week.

Source: HR Policy Document (Updated: January 2026, Page 12)

3. Domain Specialization

RAG can be tailored to specific industries without retraining the entire LLM.

4. Reduced Hallucinations

By grounding responses in retrieved documents, RAG reduces made-up information, although it does not eliminate it: the model can still misread or ignore the context it is given.
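Grounding depends heavily on how the prompt is worded. The sketch below numbers the retrieved passages and tells the model to admit when the context does not contain the answer. The function name `build_grounded_prompt` and the exact instruction wording are assumptions, not a standard template.

```python
def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Build a prompt that restricts the model to the numbered passages."""
    numbered = "\n".join(
        f"[{i}] {text}" for i, text in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered context below.\n"
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "What is the warranty for Product B?",
    [
        "Product B costs $149 and has a 2-year warranty.",
        "All products can be returned within 30 days.",
    ],
)
print(prompt)
```

Numbering the passages also gives the model something concrete to cite, which helps with source attribution.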

5. Privacy and Security

Sensitive data stays in your own knowledge base rather than being used to train an external model; only the passages retrieved for a query are passed to the LLM.

6. Cost Efficiency

Updating a knowledge base is usually cheaper than fine-tuning a large model for each use case.

RAG Architecture Components

Vector Databases

RAG systems typically use vector databases to store and search documents efficiently.

Popular Vector Databases:

- Pinecone

- Weaviate

- ChromaDB

- Milvus

- FAISS (Facebook AI Similarity Search), a similarity-search library rather than a full database
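Conceptually, a vector store keeps (ID, vector) pairs and returns the entries closest to a query vector. The brute-force sketch below illustrates that idea in pure Python. `InMemoryVectorStore` is a hypothetical class written for this guide, not the API of any product listed above; real systems add persistence and indexing (often approximate nearest-neighbor search) so they do not have to compare against every stored vector.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemoryVectorStore:
    """Toy vector store that scans every vector on each query."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, doc_id: str, vector: list[float]) -> None:
        self._items.append((doc_id, vector))

    def query(self, vector: list[float], n_results: int = 2) -> list[tuple[str, float]]:
        """Return the n_results most similar (doc_id, score) pairs."""
        scored = [(doc_id, cosine_similarity(vector, v)) for doc_id, v in self._items]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:n_results]


store = InMemoryVectorStore()
store.add("doc_0", [1.0, 0.0])
store.add("doc_1", [0.0, 1.0])
store.add("doc_2", [0.7, 0.7])
top = store.query([0.9, 0.1], n_results=2)
print([doc_id for doc_id, _ in top])
```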

Embedding Models

Embedding models convert text into numerical vectors so that passages with similar meaning can be found by comparing vectors.

Common Embedding Models:

- OpenAI’s text-embedding-3-small

- Sentence Transformers

- Google’s Universal Sentence Encoder

- Cohere Embed
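To make "text as vectors" concrete, here is a toy embedding that counts words, followed by a cosine-similarity comparison. This is only a sketch: the `embed` and `cosine` functions and the sample sentences are made up for illustration. The models listed above are learned neural networks that capture meaning well beyond shared words.

```python
import math
import re
from collections import Counter


def words(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def embed(text: str, vocabulary: list[str]) -> list[float]:
    """Toy embedding: one dimension per vocabulary word, valued by its count."""
    counts = Counter(words(text))
    return [float(counts[word]) for word in vocabulary]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


texts = [
    "the laptop battery lasts ten hours",
    "battery life of the laptop is ten hours",
    "our office closes on public holidays",
]
vocabulary = sorted({word for text in texts for word in words(text)})
vectors = [embed(text, vocabulary) for text in texts]

sim_related = cosine(vectors[0], vectors[1])    # two sentences about battery life
sim_unrelated = cosine(vectors[0], vectors[2])  # no words in common
print(sim_related > sim_unrelated)
```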

LLM Integration

The final generation step uses models like:

- GPT-4 / GPT-3.5

- Claude (Anthropic)

- Llama 2/3

- Google’s Gemini

Building a Simple RAG System

Here’s a high-level overview of building a RAG system:

1. Prepare Your Knowledge Base

```python
documents = [
    "Product A costs $99 and has a 1-year warranty.",
    "Product B costs $149 and has a 2-year warranty.",
    "All products can be returned within 30 days.",
]
```

2. Create Embeddings

```python
from sentence_transformers import SentenceTransformer

model = SentenceTransformer('all-MiniLM-L6-v2')
embeddings = model.encode(documents)
```

3. Store in Vector Database

```python
import chromadb

client = chromadb.Client()
collection = client.create_collection("product_info")

collection.add(
    documents=documents,
    embeddings=embeddings.tolist(),  # convert the NumPy array to plain Python lists
    ids=[f"doc_{i}" for i in range(len(documents))]
)
```

4. Query and Retrieve

```python
query = "What is the warranty for Product B?"
query_embedding = model.encode([query])

results = collection.query(
    query_embeddings=query_embedding.tolist(),  # plain lists, one per query
    n_results=2
)
```

5. Generate Response

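```python
from openai import OpenAI

context = "\n".join(results['documents'][0])
prompt = f"Context: {context}\n\nQuestion: {query}\n\nAnswer:"

# Send to LLM (openai>=1.0 client; reads OPENAI_API_KEY from the environment)
llm_client = OpenAI()
response = llm_client.chat.completions.create(
    model="gpt-4",
    messages=[{"role": "user", "content": prompt}]
)
print(response.choices[0].message.content)
```

RAG vs Fine-Tuning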

| Aspect | RAG | Fine-Tuning |
|---|---|---|
| Cost | Lower (no model retraining) | Higher (requires GPU resources) |
| Updates | Easy (just update documents) | Requires retraining |
| Accuracy | High for factual queries | High for style/behavior |
| Transparency | Citations available | Black box |
| Use Case | Knowledge retrieval | Task specialization |

Common RAG Use Cases

- Enterprise Search - Search across company documents, emails, and databases

- Customer Support - Answer customer questions using product manuals and FAQs

- Research Assistance - Summarize academic papers and research findings

- Code Documentation - Help developers navigate large codebases

- Healthcare - Provide medical information based on patient records and research

- Legal - Search and summarize legal documents and case law

- Education - Create personalized tutoring systems with course materials

Challenges and Limitations

1. Retrieval Quality

If the retrieval step fails to find relevant documents, the LLM’s response will be poor.
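Retrieval quality can be measured separately from generation. A common metric is recall@k: of the documents known to be relevant to a query, what fraction show up in the top k results? The sketch below assumes you have hand-labeled the relevant document IDs for a handful of test queries; the IDs shown are placeholders.

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top-k retrieved results."""
    if not relevant_ids:
        return 0.0
    hits = set(retrieved_ids[:k]) & relevant_ids
    return len(hits) / len(relevant_ids)


# Placeholder data: the retriever's ranked output and the labeled ground truth.
retrieved = ["doc_a", "doc_b", "doc_c"]
relevant = {"doc_a", "doc_c", "doc_d"}

print(recall_at_k(retrieved, relevant, k=2))
print(recall_at_k(retrieved, relevant, k=3))
```

Averaging this score over a fixed set of test queries gives a number you can track as you change chunking, embeddings, or search settings.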

2. Context Window Limits

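LLMs have limits on how much text they can process at once, ranging from a few thousand tokens (e.g., 4K, 8K, 32K) to hundreds of thousands, depending on the model. Retrieved context has to fit within that limit alongside the question and the answer.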
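A simple way to stay inside the limit is to add retrieved chunks in ranked order until a token budget is used up. The sketch below estimates tokens by counting whitespace-separated words, which is an assumption for illustration only; real tokenizers produce different counts, so use your model's tokenizer in practice.

```python
def count_tokens(text: str) -> int:
    """Rough token estimate: whitespace-separated words. Real tokenizers differ."""
    return len(text.split())


def fit_to_budget(chunks: list[str], max_tokens: int) -> list[str]:
    """Keep chunks in ranked order until the next one would exceed max_tokens."""
    selected: list[str] = []
    used = 0
    for chunk in chunks:
        cost = count_tokens(chunk)
        if used + cost > max_tokens:
            break
        selected.append(chunk)
        used += cost
    return selected


ranked_chunks = ["a b c", "d e", "f g h i"]  # best match first
print(fit_to_budget(ranked_chunks, max_tokens=5))
```

Stopping at the first chunk that does not fit keeps the highest-ranked material and preserves its order.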

3. Latency

RAG adds retrieval time to response generation, which can slow down the user experience.

4. Document Chunking

Breaking large documents into searchable chunks requires careful strategy.

5. Conflicting Information

If retrieved documents contradict each other, the LLM might generate confused responses.

Best Practices for RAG Implementation

1. Chunk Documents Intelligently

Split documents at natural boundaries (paragraphs, sections) rather than arbitrary character counts.
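As a minimal sketch of boundary-aware chunking, the function below groups whole paragraphs into chunks up to a size limit instead of cutting at a fixed character offset. The name `chunk_by_paragraph` and the `max_chars` default are assumptions; it also assumes paragraphs are separated by blank lines.

```python
def chunk_by_paragraph(text: str, max_chars: int = 500) -> list[str]:
    """Group whole paragraphs into chunks of at most max_chars characters.

    A single paragraph longer than max_chars is kept intact as its own chunk.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current = ""
    for paragraph in paragraphs:
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if not current or len(candidate) <= max_chars:
            current = candidate  # paragraph fits (or starts a new chunk)
        else:
            chunks.append(current)  # close the full chunk, start the next one
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


sample = "A" * 10 + "\n\n" + "B" * 10 + "\n\n" + "C" * 10
print(chunk_by_paragraph(sample, max_chars=25))
```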

2. Use Hybrid Search

Combine vector similarity search with keyword search for better retrieval accuracy.
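One common way to merge the two result lists is Reciprocal Rank Fusion (RRF), which only needs each document's rank in each list and so avoids having to compare vector scores with keyword scores. This guide does not prescribe a fusion method, so treat the sketch below as one option; the document IDs are placeholders and `k=60` is a commonly used default.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs into a single ranking.

    Each document scores the sum of 1 / (k + rank) over the lists it
    appears in, with rank starting at 1.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


vector_ranking = ["doc_2", "doc_1", "doc_3"]   # from vector similarity search
keyword_ranking = ["doc_1", "doc_4", "doc_2"]  # from keyword search
fused = reciprocal_rank_fusion([vector_ranking, keyword_ranking])
print(fused)
```

Documents that rank well in both lists rise to the top, while documents found by only one method are still kept.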

3. Re-ranking

After initial retrieval, use a re-ranking model to select the most relevant results.
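The two-stage pattern can be sketched as follows: a fast first pass returns a broad candidate list, then a slower, more precise scorer reorders it and keeps only the best few. In practice the scorer is usually a dedicated re-ranking model; `term_coverage` below is a deliberately simple placeholder so the example runs without any model, and both function names are made up for illustration.

```python
import re
from typing import Callable


def term_coverage(query: str, document: str) -> float:
    """Placeholder scorer: share of query words that appear in the document."""
    query_words = set(re.findall(r"[a-z0-9]+", query.lower()))
    doc_words = set(re.findall(r"[a-z0-9]+", document.lower()))
    return len(query_words & doc_words) / len(query_words) if query_words else 0.0


def rerank(
    query: str,
    candidates: list[str],
    score_fn: Callable[[str, str], float],
    top_n: int = 3,
) -> list[str]:
    """Reorder candidates by score_fn(query, candidate) and keep the top_n."""
    ordered = sorted(candidates, key=lambda doc: score_fn(query, doc), reverse=True)
    return ordered[:top_n]


candidates = [
    "Shipping takes five days.",
    "The laptop ships with a charger.",
    "The laptop warranty length is two years.",
]
best = rerank("laptop warranty length", candidates, term_coverage, top_n=2)
print(best)
```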

4. Metadata Filtering

Add filters (date, category, author) to improve retrieval precision.

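```python
# Chroma needs $and to combine conditions, and $gte compares numbers,
# so this sketch assumes dates are stored as YYYYMMDD integers.
results = collection.query(
    query_embeddings=query_embedding.tolist(),
    where={"$and": [{"category": "technical_docs"}, {"date": {"$gte": 20250101}}]},
    n_results=5
)
```

5. Monitor and Iterate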

Track which queries fail and continuously improve your knowledge base and retrieval strategy.
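A lightweight way to start is to record, for every query, whether retrieval returned nothing or only a weak best match. The sketch below assumes a higher score means a better match (if your store returns distances, invert the comparison). The `RetrievalMonitor` class and its `min_score` threshold are hypothetical and would need tuning for your similarity metric.

```python
from collections import Counter
from typing import Optional


class RetrievalMonitor:
    """Tracks how often retrieval comes back empty or with a weak best match."""

    def __init__(self, min_score: float = 0.5) -> None:
        self.min_score = min_score  # hypothetical threshold; tune for your metric
        self.total = 0
        self.failures = Counter()

    def record(self, query: str, top_score: Optional[float]) -> None:
        """Log one query; top_score is None when nothing was retrieved."""
        self.total += 1
        if top_score is None or top_score < self.min_score:
            self.failures[query] += 1

    def failure_rate(self) -> float:
        failed = sum(self.failures.values())
        return failed / self.total if self.total else 0.0

    def worst_queries(self, n: int = 5) -> list[tuple[str, int]]:
        """Queries that failed most often, most frequent first."""
        return self.failures.most_common(n)


monitor = RetrievalMonitor(min_score=0.5)
monitor.record("warranty for Product B", 0.82)
monitor.record("do you ship to Canada", 0.21)
monitor.record("do you ship to Canada", None)
print(monitor.failure_rate())
print(monitor.worst_queries(1))
```

Queries that fail repeatedly usually point to missing documents or to chunks that need to be split differently.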

The Future of RAG

RAG is rapidly evolving with exciting developments:

- Agentic RAG: Systems that can plan multi-step retrieval strategies

- Multimodal RAG: Retrieving and processing images, videos, and audio

- Real-time RAG: Integration with live APIs and streaming data

- Personalized RAG: Adapting retrieval based on user preferences and history

Conclusion

Retrieval-Augmented Generation represents a powerful approach to making AI systems more accurate, up-to-date, and trustworthy. By combining the reasoning capabilities of LLMs with the precision of information retrieval, RAG enables applications that would be impossible with either technology alone.

Whether you’re building a customer support chatbot, a research assistant, or an enterprise knowledge management system, RAG provides a practical and cost-effective solution.

References

Research Papers

- “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks” - Lewis et al. (2020): https://arxiv.org/abs/2005.11401

- “Atlas: Few-shot Learning with Retrieval Augmented Language Models” - Izacard et al. (2022): https://arxiv.org/abs/2208.03299

- “In-Context Retrieval-Augmented Language Models” - Ram et al. (2023): https://arxiv.org/abs/2302.00083

Documentation & Tutorials

- LangChain RAG Tutorial: https://python.langchain.com/docs/use_cases/question_answering/

- Pinecone RAG Guide: https://www.pinecone.io/learn/retrieval-augmented-generation/

- OpenAI Embeddings Documentation: https://platform.openai.com/docs/guides/embeddings

- Hugging Face - RAG Models: https://huggingface.co/docs/transformers/model_doc/rag

Tools & Frameworks

- LangChain - Framework for building RAG applications: https://github.com/langchain-ai/langchain

- LlamaIndex - Data framework for LLM applications: https://github.com/run-llama/llama_index

- ChromaDB - Open-source embedding database: https://www.trychroma.com/

YouTube Videos

Beginner-Friendly Tutorials

- “RAG Explained in 5 Minutes” - IBM Technology: https://www.youtube.com/watch?v=T-D1OfcDW1M

- “Build a RAG Chatbot in 15 Minutes” - freeCodeCamp: https://www.youtube.com/watch?v=tcqEUSNCn8I

- “What is Retrieval Augmented Generation (RAG)?” - Google Cloud Tech: https://www.youtube.com/watch?v=bUHFg8CZFws

Advanced Deep Dives

- “Advanced RAG Techniques” - DeepLearning.AI: https://www.youtube.com/watch?v=NtMvNh0WFVM

- “Building Production RAG Systems” - LangChain: https://www.youtube.com/watch?v=sVcwVQRHIc8

- “RAG vs Fine-Tuning: Which Should You Choose?” - AI Explained: https://www.youtube.com/watch?v=YVWxbHJakgg

Implementation Tutorials

- “Build RAG with Python, LangChain, and OpenAI” - Tech With Tim: https://www.youtube.com/watch?v=2TJxpyO3ei4

- “Vector Databases Explained for RAG” - ByteByteGo: https://www.youtube.com/watch?v=klTvEwg3oJ4
